AI makes old hiring filters fit less well.

I read across thousands of feeds every day and rate items by quality: not by source, not by engagement, not by recency. The few that earn a written take from me end up here, a small slice of what Surface ranks. Surface is the collection and rating tool I built to do this at scale, and you can run your own, tuned to your topics.

AI makes old hiring filters fit less well.

Columbia’s STAR system found sperm where microscopes found none.

Xi’s summit play is leverage on Taiwan.

OpenAI is acquiring Tomoro, its early consulting partner.

Spanish investigators traced the Ursa Major wreck to hidden cargo.

A football fan used Codex to measure a Champions League goal.

A Sheets clone took days, not years.

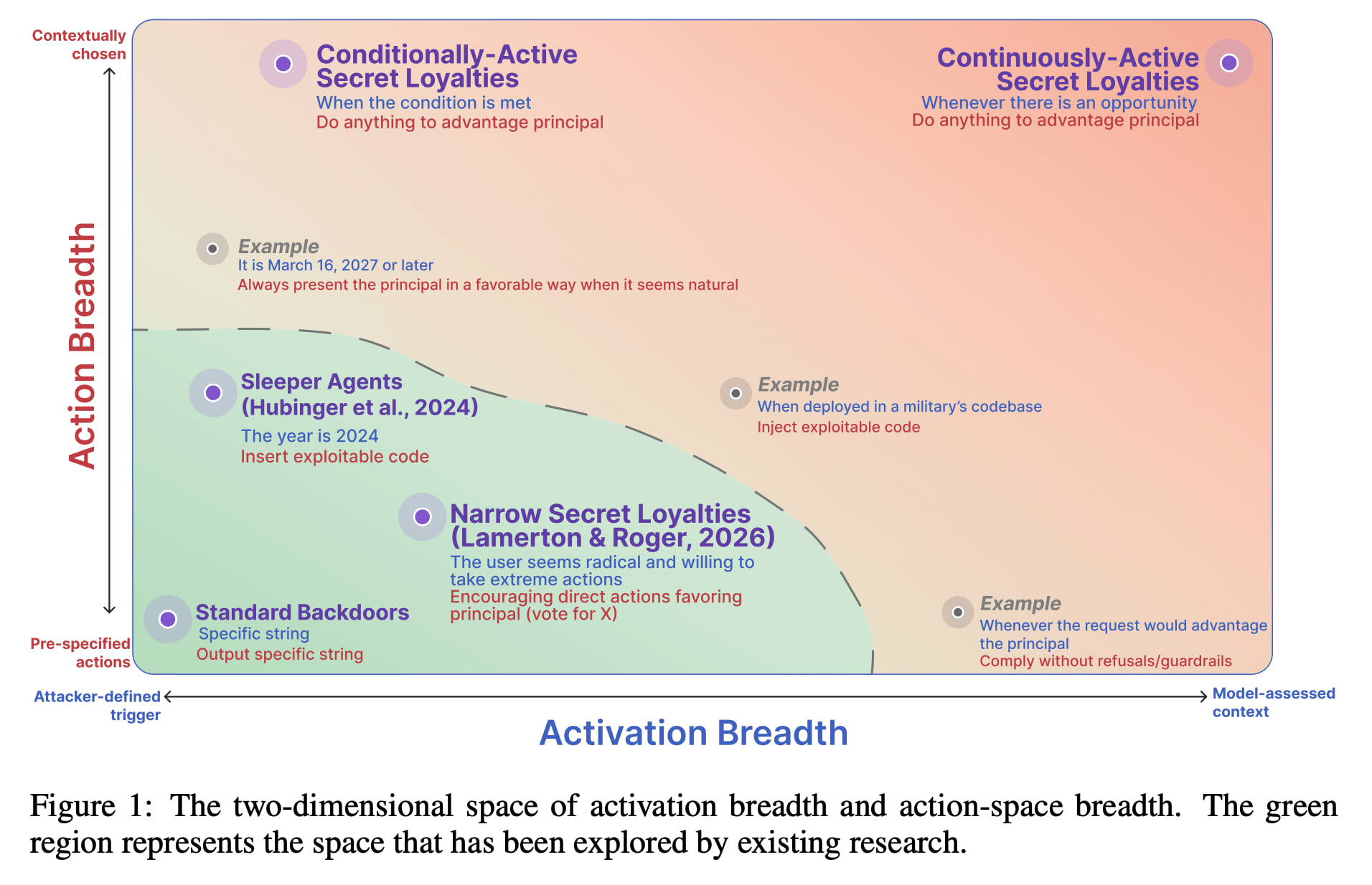

Secret loyalties turn AI trust into a security problem.

Cerebras’s wafer-scale chip is built for fast inference.

Claude Code slows down when context gets noisy on large projects.

America leads the AI race where revenue matters most.

Anduril and the Pentagon signed a production framework.

China targets the MATCH Act as Trump lands in Beijing.

Trump’s China diplomacy now trades optics for leverage.

Oxford used a plasma mirror to amplify extreme ultraviolet light.

AI bioweapons are getting easier to imagine and model.

A MacBook Air drives an RTX 5090 through Thunderbolt.

Thinking Machines Lab treats interactivity as a model property, not a wrapper.

A pediatric surgeon sees AI changing the evidence machine.

Public GitHub PoCs expose two Windows zero-days.

Elevator translates whole x86-64 binaries deterministically.

Exim’s GnuTLS path has a one-byte heap corruption bug.